System Overview

Mechanical Sub-System

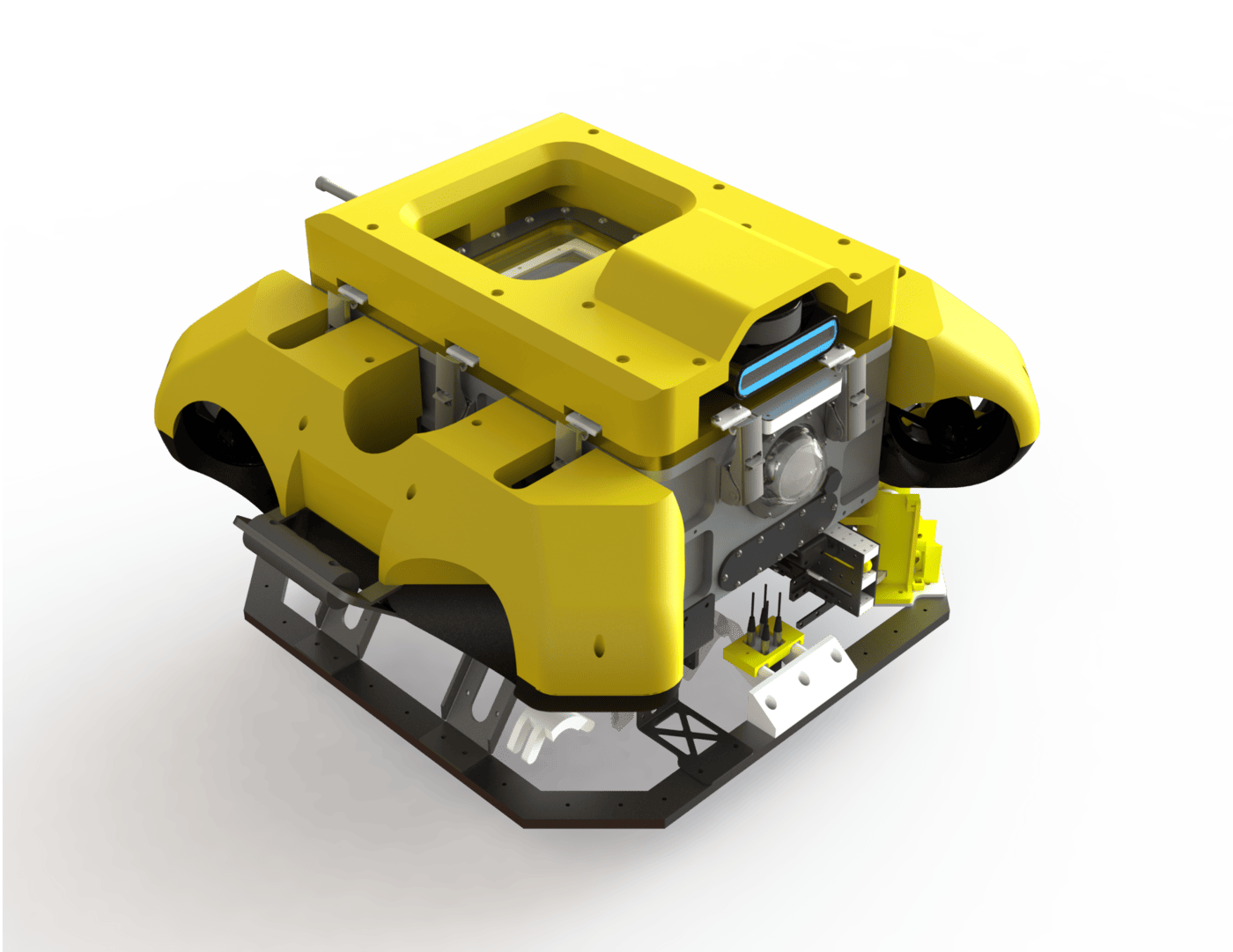

We chose a rectangular hull for BBAUV 4.0 so that the internal components and electronics pack efficiently. A fibreglass float fits on the outside of the hull, making the vehicle much more resilient to external impacts while preserving its manoeuvrability. A 3D-printed shell was designed to fit below the float, and a new octagonal mounting frame lets us mount more actuation modules while keeping them protected.

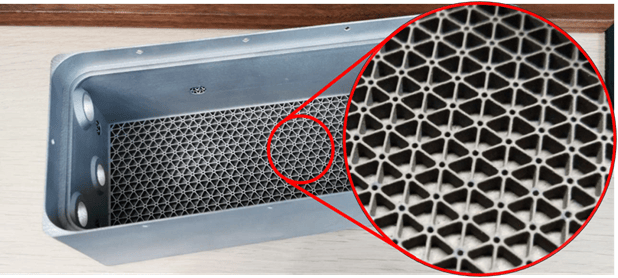

The battery hull was manufactured with novel metal 3D-printing technology, and we increased its rigidity-to-weight ratio by embedding lattices in the walls and base. Unlike traditional manufacturing methods, 3D printing allows for tight corners, giving our battery a snug fit. Together, these factors significantly reduce the vehicle’s weight and volume.

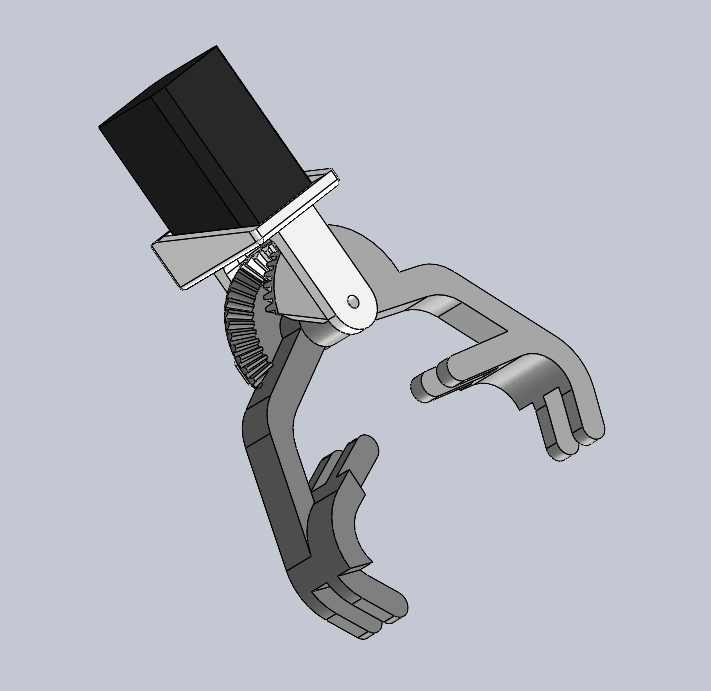

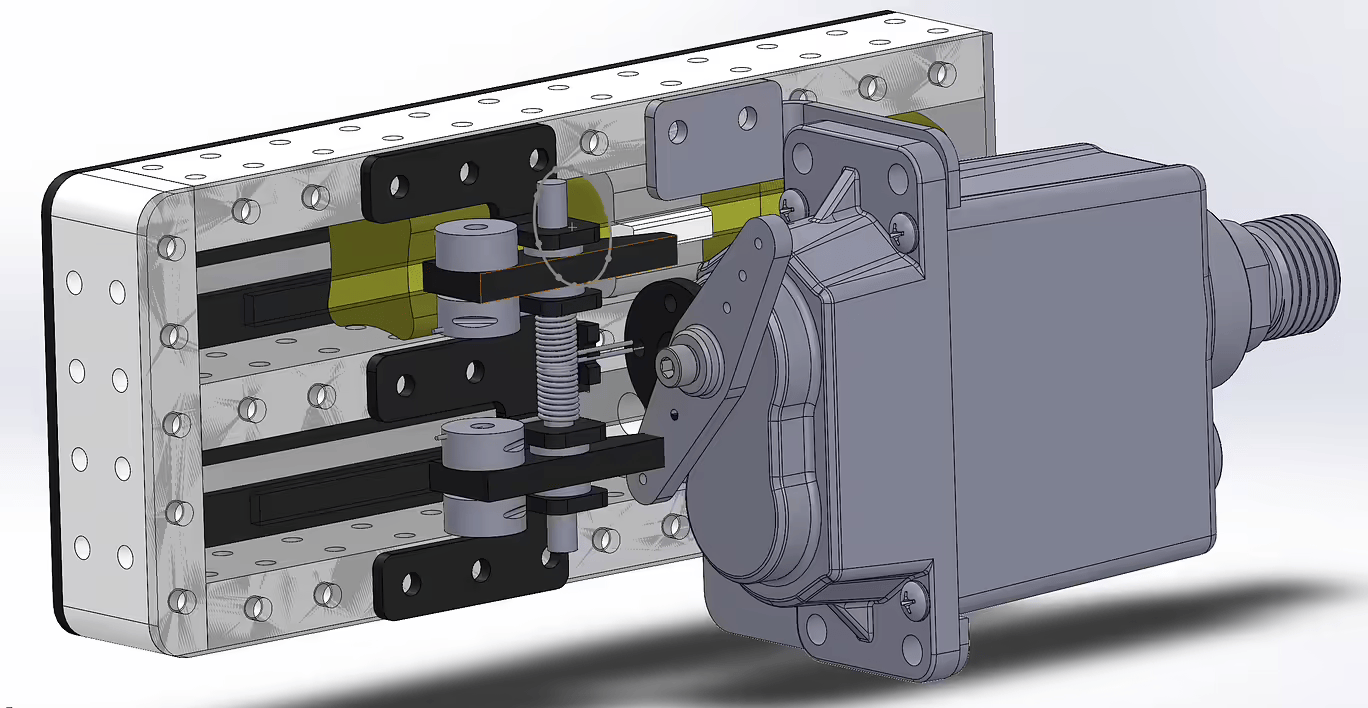

The bulky Newton gripper used in previous years was replaced with a smaller claw driven by bevel gears and a stepper motor; the claws tuck neatly under the hull to reduce their footprint. Our ball dropper and torpedo launcher use the Bluetrail underwater servo; although larger than our old custom servos, it markedly improves the reliability and depth rating of both actuators.

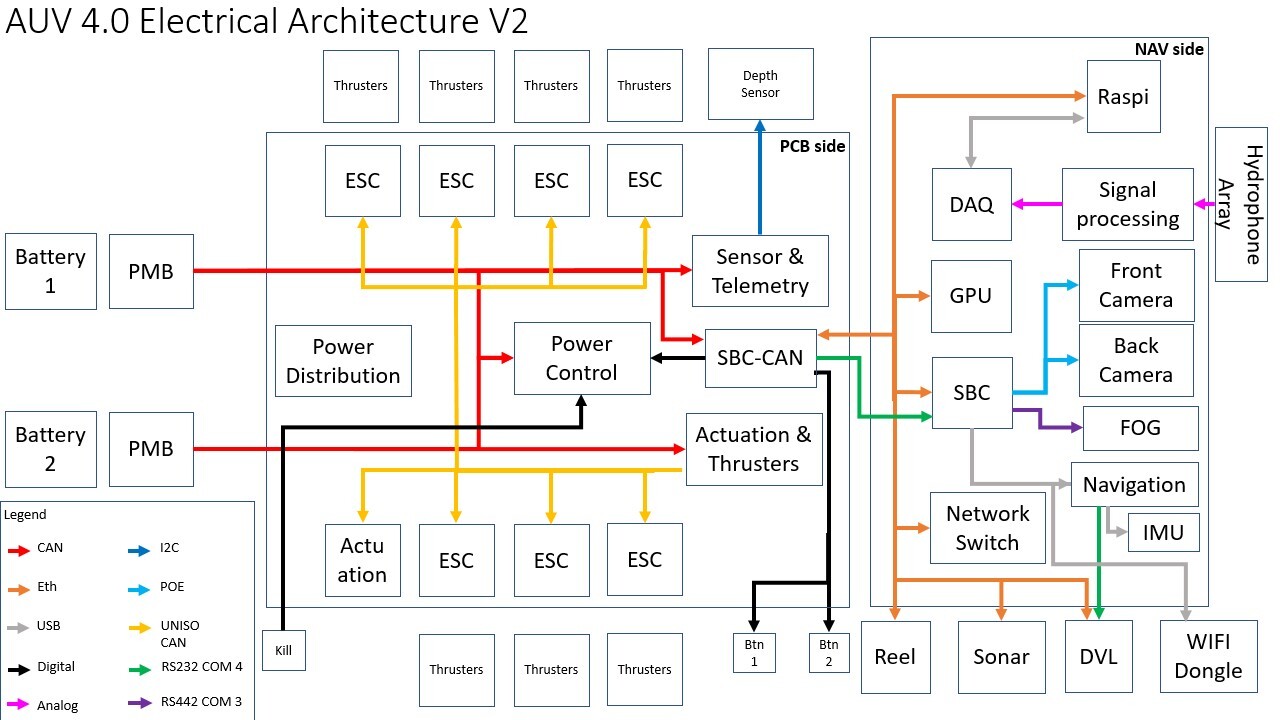

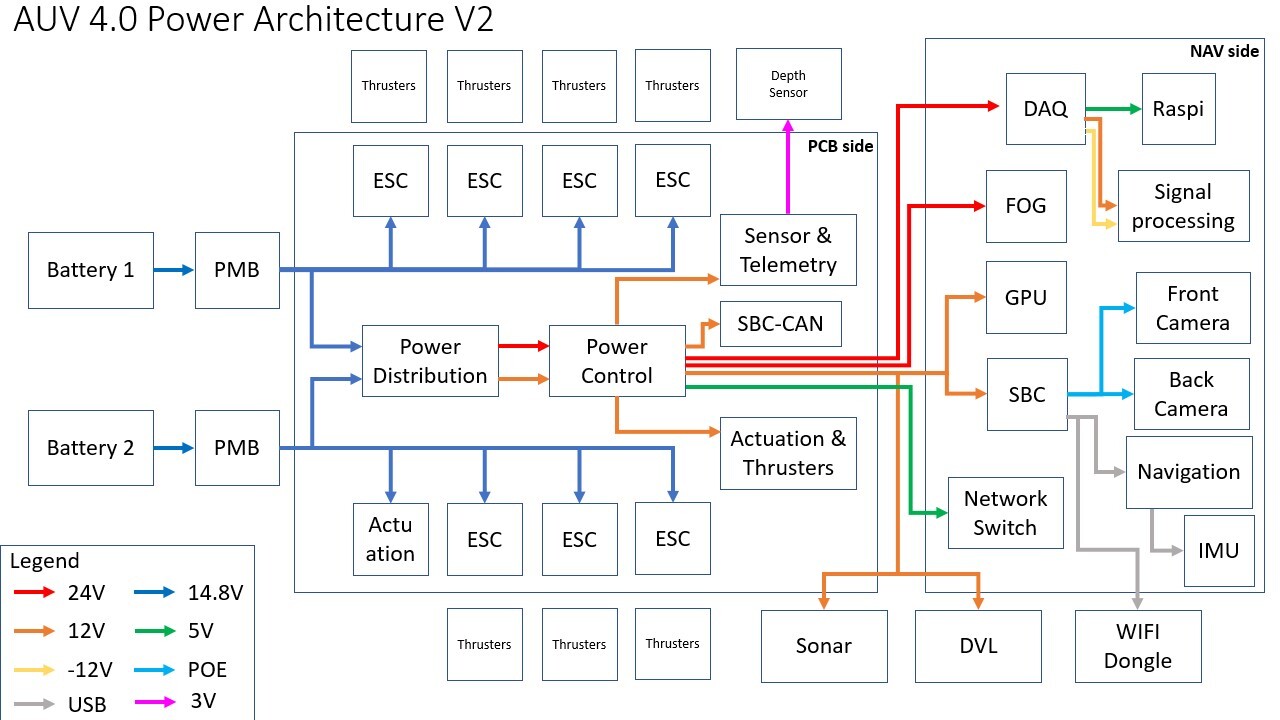

Electrical Sub-System

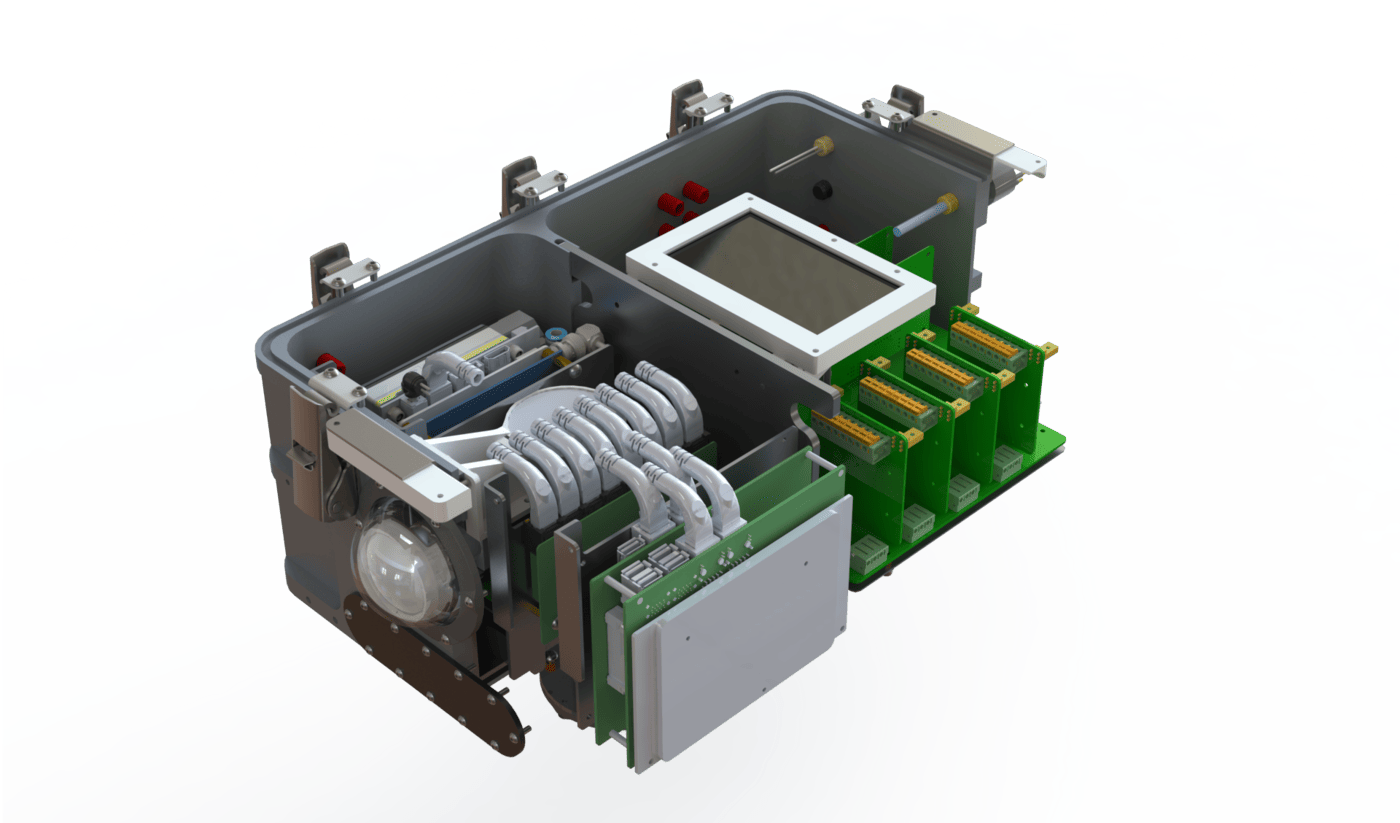

Our custom-designed Power Monitoring Board enables our control board to selectively prioritise and disable systems during low-power scenarios. Our batteries are hot-swappable by means of a load-balancer between them; the vehicle can stay running during swaps, minimising operational downtime.
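The prioritisation idea can be illustrated with a minimal load-shedding sketch. This is not the firmware on our Power Monitoring Board: the `Load` type, the `select_loads` function, the priority convention, and all wattage figures are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Load:
    name: str
    priority: int  # assumed convention: lower number = more critical
    watts: float   # illustrative power draw, not a measured value


def select_loads(loads, budget_watts):
    """Return the names of loads to keep powered within the budget.

    Loads are considered in strict priority order. A load that does not
    fit in the remaining budget is skipped, so a smaller, less critical
    load may still be kept after it.
    """
    kept, used = [], 0.0
    for load in sorted(loads, key=lambda item: item.priority):
        if used + load.watts <= budget_watts:
            kept.append(load.name)
            used += load.watts
    return kept


# Hypothetical subsystems and power figures.
loads = [
    Load("thrusters", 0, 120.0),
    Load("main_computer", 1, 30.0),
    Load("sonar", 2, 25.0),
    Load("actuators", 3, 15.0),
]

if __name__ == "__main__":
    print(select_loads(loads, 160.0))
```

Skipping a load that does not fit, rather than stopping at it, is one possible policy; a stricter scheme could refuse to power anything below the first load that has to be shed.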

Our backplane system gives the electrical system flexibility by separating the high- and low-level circuitry. The backplanes are easily accessed by opening the top lid, which lets us perform maintenance without being obstructed by other components. Each system can also function independently, so any failure can be traced quickly. The separation also isolates electrically sensitive equipment from the electrical noise generated by the other electronics.

A new fibre-optic gyroscope (FOG) was installed to complement our IMU, which struggles near ferrous objects such as pool walls. The new sensor has a low drift rate and is far less susceptible to external electromagnetic interference than our IMU.

Software Sub-System

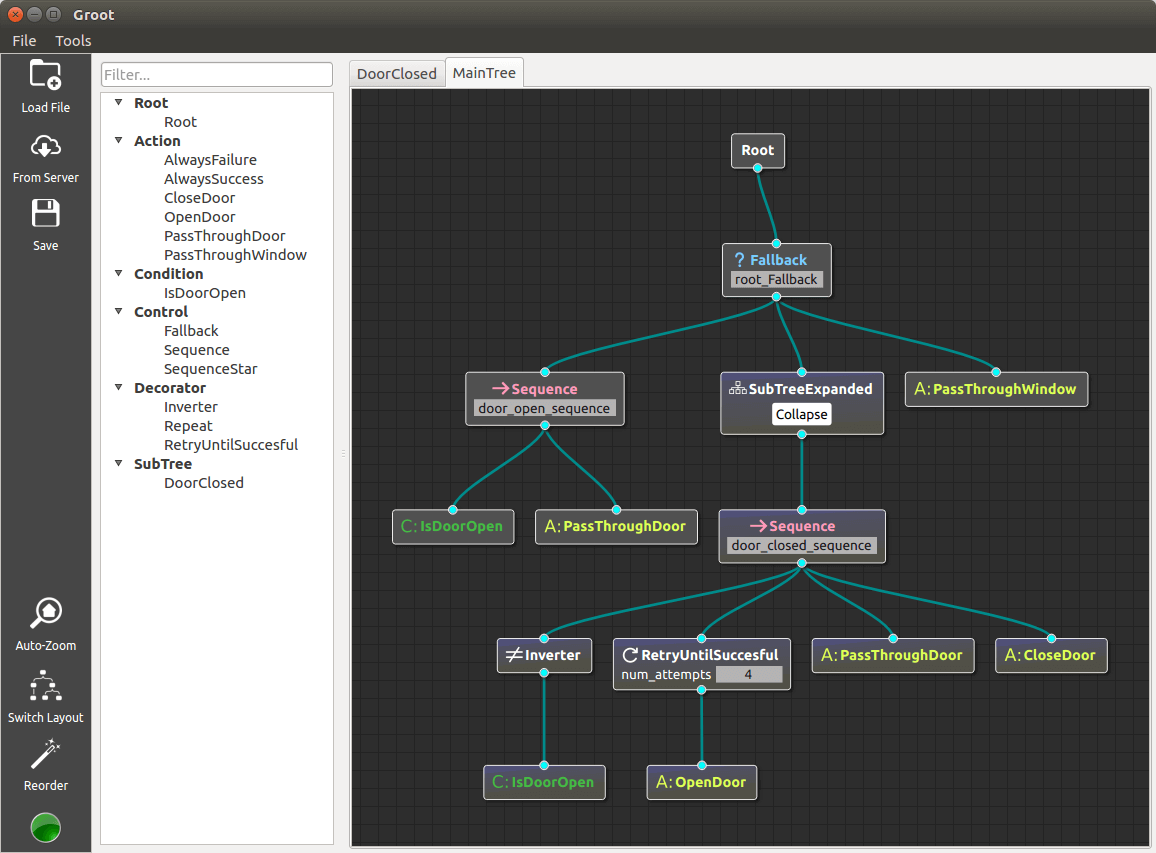

After a year of testing our behaviour tree (BT) based mission planner, we discovered several flaws in our initial implementation. The first version was based on the ROS 2 navigation stack and suffered from being overly generic. Our new planner greatly simplifies the complicated process of adding nodes. In addition, nodes that interface with ROS topics, services, etc. were rewritten to better fit the ROS 1 paradigm.
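For readers unfamiliar with behaviour trees, the following is a minimal, library-free sketch of the core idea: composite nodes tick their children in order and propagate a status upwards. It is not our planner and does not use ROS; the class names `Action`, `Sequence`, and `Fallback` and the mission steps in the usage example are hypothetical.

```python
from enum import Enum


class Status(Enum):
    SUCCESS = 1
    FAILURE = 2
    RUNNING = 3


class Action:
    """Leaf node wrapping a callable that returns a Status."""

    def __init__(self, name, fn):
        self.name = name
        self.fn = fn

    def tick(self):
        return self.fn()


class Sequence:
    """Ticks children in order; returns the first status that is not SUCCESS."""

    def __init__(self, *children):
        self.children = children

    def tick(self):
        for child in self.children:
            status = child.tick()
            if status is not Status.SUCCESS:
                return status
        return Status.SUCCESS


class Fallback:
    """Ticks children in order; returns the first status that is not FAILURE."""

    def __init__(self, *children):
        self.children = children

    def tick(self):
        for child in self.children:
            status = child.tick()
            if status is not Status.FAILURE:
                return status
        return Status.FAILURE


if __name__ == "__main__":
    # Hypothetical mission: dive, then find the gate by detection or by searching.
    mission = Sequence(
        Action("dive", lambda: Status.SUCCESS),
        Fallback(
            Action("detect_gate", lambda: Status.FAILURE),
            Action("search_pattern", lambda: Status.RUNNING),
        ),
    )
    print(mission.tick())
```

These composites are memoryless: every tick restarts from the first child. Whether a planner should instead resume from a running child is a design choice this sketch does not address.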

We completely revamped our machine learning pipeline this year. Instead of a mixture of Python 2 and Cython, we now use Python 3, which is easier to maintain without sacrificing speed. We also refactored our existing YOLOv5-based architecture to allow the use of TensorRT. This lets us leverage device-specific optimisations on our NVIDIA Jetson Xavier, giving a 2× increase in FPS.

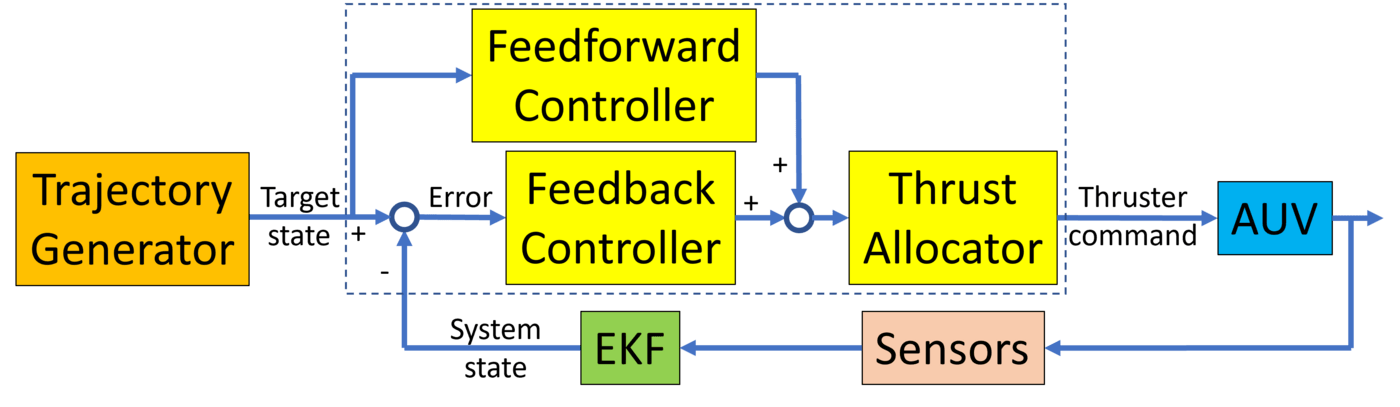

Lastly, we implemented a trajectory generator to create smooth, continuous paths for the AUV to follow. The trajectories are calculated using linear segments with polynomial blends, with limits on the maximum velocity, acceleration, and jerk of the vehicle. This improved performance for distant setpoint goals by avoiding controller saturation.
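A one-dimensional sketch of this kind of profile is shown below. It is an illustration under stated assumptions, not our generator: the move is rest-to-rest along a single axis, the velocity ramps use a cubic blend, and the function names `blend_time`, `plan`, and `position` are hypothetical. With the blend v(t) = v(3u² − 2u³), u = t / t_b, the peak acceleration is 1.5·v / t_b and the peak jerk is 6·v / t_b², so the blend duration can be chosen to respect both limits.

```python
import math


def blend_time(v, a_max, j_max):
    """Shortest blend duration that ramps 0 -> v within both limits."""
    return max(1.5 * v / a_max, math.sqrt(6.0 * v / j_max))


def plan(distance, v_max, a_max, j_max):
    """Return (cruise speed, blend time, cruise time) for a rest-to-rest move."""
    if distance <= 0 or min(v_max, a_max, j_max) <= 0:
        raise ValueError("distance and limits must be positive")
    v = v_max
    # Each blend covers v * t_b / 2, so the two blends together cover v * t_b.
    if v * blend_time(v, a_max, j_max) > distance:
        # Short move: bisect for the largest cruise speed whose blends still fit.
        lo, hi = 0.0, v_max
        for _ in range(60):
            mid = 0.5 * (lo + hi)
            if mid * blend_time(mid, a_max, j_max) > distance:
                hi = mid
            else:
                lo = mid
        v = lo
    t_b = blend_time(v, a_max, j_max)
    t_c = max(0.0, (distance - v * t_b) / v)
    return v, t_b, t_c


def position(t, v, t_b, t_c):
    """Position along the path at time t (clamped to the move duration)."""
    total = 2.0 * t_b + t_c
    distance = v * (t_b + t_c)
    t = min(max(t, 0.0), total)
    if t < t_b:                      # accelerating blend
        u = t / t_b
        return v * t_b * (u**3 - 0.5 * u**4)
    if t <= t_b + t_c:               # linear (constant-velocity) segment
        return 0.5 * v * t_b + v * (t - t_b)
    u = (total - t) / t_b            # decelerating blend, mirrored
    return distance - v * t_b * (u**3 - 0.5 * u**4)


if __name__ == "__main__":
    v, t_b, t_c = plan(distance=5.0, v_max=0.5, a_max=0.25, j_max=0.5)
    for t in (0.0, 3.0, 6.5, 10.0, 13.0):
        print(f"t={t:5.1f} s  x={position(t, v, t_b, t_c):.3f} m")
```

Because the setpoint fed to the controller never moves faster than the chosen limits, a distant goal becomes a sequence of nearby setpoints rather than one large step, which is how a profile like this avoids saturation.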

Competition Strategy

For RoboSub 2022, we plan to deploy our BBAUV 4.0 to complete all competition tasks. Taking advantage of the absence of physical RoboSub competitions over the past two years, we have iterated tirelessly on our newest vehicle and have achieved feature parity with our older BBAUV 3.99.

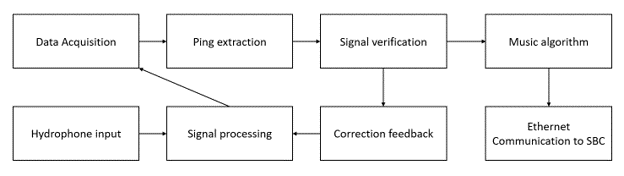

For our task strategy, we employ a sensor fusion approach: our vision pipeline combines sonar images with machine learning (ML) object detection to accurately localise and identify task objects, such as the buoys in Make the Grade or the torpedo openings in Survive the Shootout. Our strategies for these tasks, as well as With Moxy and Choose Your Side, are therefore similar: approach the targets using localisation from our vision pipeline, then perform task-specific actions. For final alignment and adjustments, we rely exclusively on ML object detection, as our sonar cannot reliably detect objects at close range.
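One simple way to combine the two sensors is to take the bearing of a camera detection and attach the range of the sonar contact closest to it in bearing. The sketch below shows that idea only; it is not our vision pipeline. It assumes a pinhole camera model, a camera and sonar that are co-located and aligned, and sonar contacts already extracted as (range, bearing) pairs. The names `pixel_to_bearing` and `fuse` and the 5° matching gate are hypothetical.

```python
import math


def pixel_to_bearing(u, image_width, hfov_deg):
    """Horizontal bearing in radians (positive to the right) of pixel column u."""
    focal_px = (image_width / 2.0) / math.tan(math.radians(hfov_deg) / 2.0)
    return math.atan((u - image_width / 2.0) / focal_px)


def fuse(detection_u, image_width, hfov_deg, sonar_contacts,
         max_gap_rad=math.radians(5.0)):
    """Attach a sonar range to a camera detection by matching bearings.

    sonar_contacts is a list of (range_m, bearing_rad) tuples.
    Returns (forward_m, right_m) in the vehicle frame, or None when no
    sonar contact lies within max_gap_rad of the detection bearing.
    """
    bearing = pixel_to_bearing(detection_u, image_width, hfov_deg)
    best = min(sonar_contacts, key=lambda c: abs(c[1] - bearing), default=None)
    if best is None or abs(best[1] - bearing) > max_gap_rad:
        return None
    range_m, sonar_bearing = best
    return range_m * math.cos(sonar_bearing), range_m * math.sin(sonar_bearing)


if __name__ == "__main__":
    contacts = [(4.0, 0.0), (6.0, 0.5)]  # illustrative values
    print(fuse(320, 640, 90.0, contacts))
```

Returning `None` when no contact matches leaves room for a fallback to camera-only alignment, in line with the close-range behaviour described above.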

The Collecting and Cash or Smash tasks are also handled exclusively by ML object detection, since we are unable to use the sonar for bottom-facing tasks. We track the centroids of the detected objects, precisely adjusting the AUV’s alignment using our highly accurate Doppler Velocity Log, together with a revamped controls system. Since both tasks require grabbing actuation, we also designed a versatile grabber capable of performing both tasks, yet small enough to fit within our size constraints.
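Centroid tracking can be reduced to a normalised error between the centre of a detected bounding box and the centre of the image, which a controller can then drive towards zero. The sketch below shows only that computation. The function names, the box layout `(x_min, y_min, x_max, y_max)`, and the 5% tolerance are assumptions for illustration, not values from our system.

```python
def centroid_offset(box, image_width, image_height):
    """Normalised offset of a bounding-box centroid from the image centre.

    box is (x_min, y_min, x_max, y_max) in pixels. The result (ex, ey)
    lies in [-1, 1] for boxes inside the image; (0, 0) means centred.
    """
    x_min, y_min, x_max, y_max = box
    cx = 0.5 * (x_min + x_max)
    cy = 0.5 * (y_min + y_max)
    ex = (cx - image_width / 2.0) / (image_width / 2.0)
    ey = (cy - image_height / 2.0) / (image_height / 2.0)
    return ex, ey


def is_aligned(box, image_width, image_height, tolerance=0.05):
    """True when the centroid is within the tolerance of the image centre."""
    ex, ey = centroid_offset(box, image_width, image_height)
    return abs(ex) <= tolerance and abs(ey) <= tolerance


if __name__ == "__main__":
    # Illustrative detection in a 640x480 bottom-facing image.
    print(centroid_offset((480, 0, 640, 240), 640, 480))
```

Normalising by half the image size keeps the error independent of camera resolution, so the same tolerance applies if the image size changes.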

For more details, read our technical paper.